Running NAM on Embedded Hardware: Guide & Insights | TONE3000

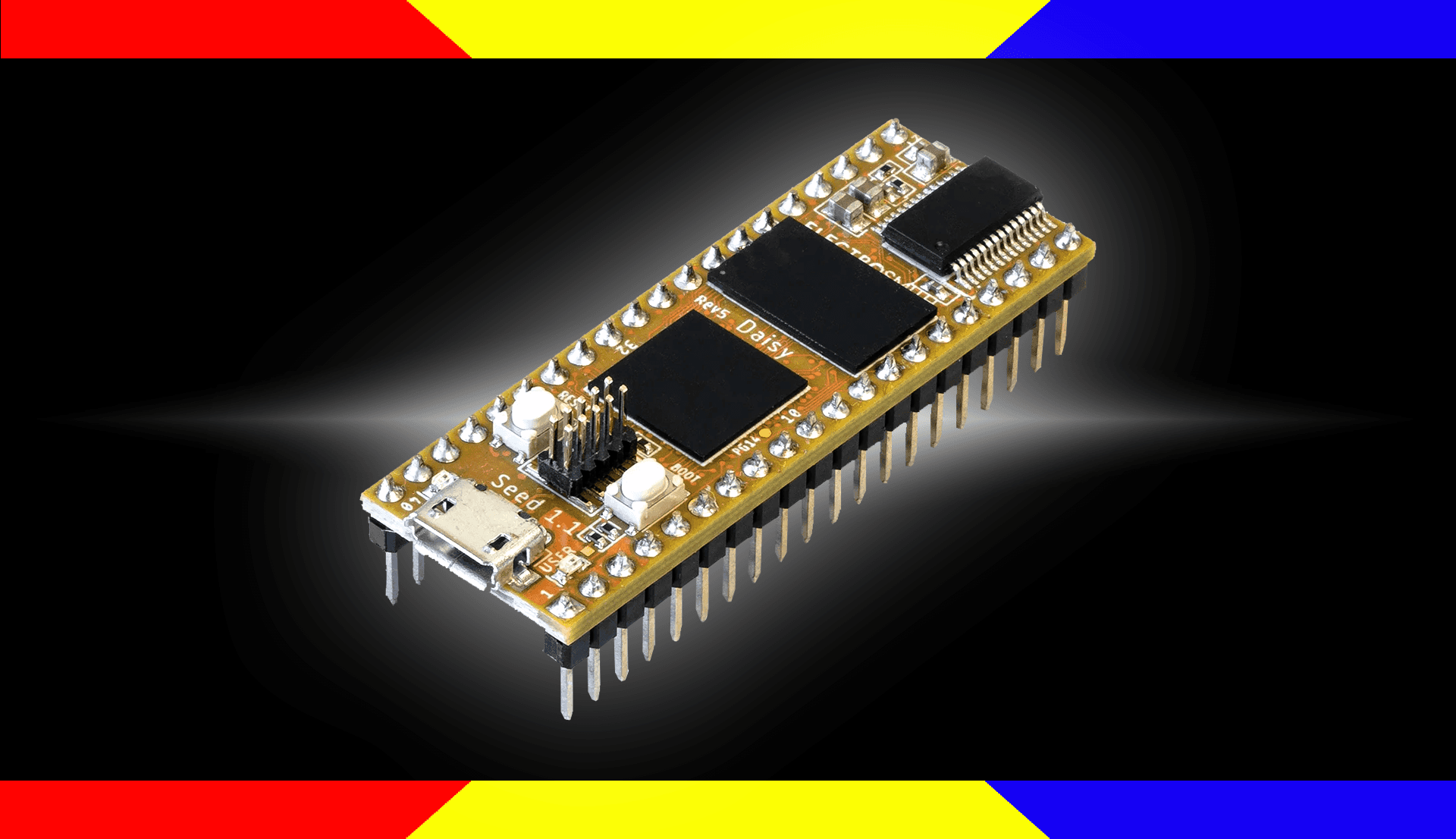

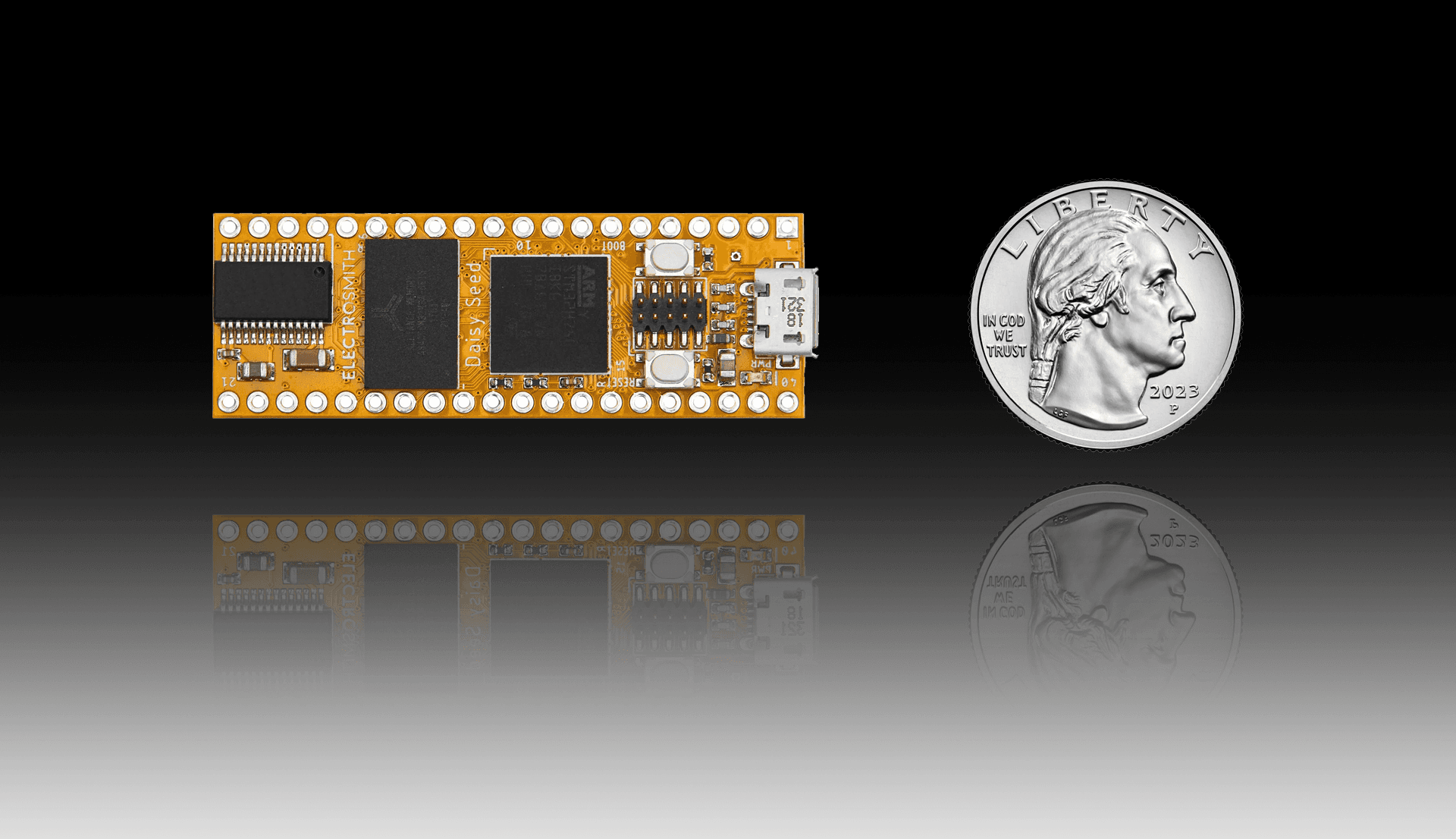


NAM has come a long way from its origins as a desktop plugin. Today it runs on single-board computers, guitar pedals like the Darkglass Anagram, and even web browsers. As we work on Architecture 2 — our next-generation NAM architecture built specifically to run on more hardware — we wanted to go hands-on and understand exactly what embedded NAM deployment looks like in practice.

So we built a NAM loader for the Electrosmith Daisy Seed: an ARM Cortex-M7 board that's become a popular foundation for DSP-based audio products, from eurorack modules to commercial guitar pedals.

Here's what we found...

The challenge: NeuralAmpModelerCore wasn't designed for this

The NeuralAmpModelerCore library has been battle-tested in the NAM plugin — but that plugin runs on a desktop with gigabytes of RAM, an operating system, and no hard deadline on how long processing can take. Embedded hardware is a completely different world: tight memory limits, no OS, and a strict real-time budget that audio simply cannot exceed.

When we first ran our implementation on the Daisy Seed, using a model small enough to fit on the device (A1-Nano with the tanh activation replaced by ReLU), processing 2 seconds of audio took over 5 seconds of compute time, more than 2.5 times slower than real time. For a guitar pedal, that's obviously a non-starter.
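One way to see why this rules out a pedal is to express the budget per audio callback. The sketch below is a back-of-the-envelope calculator, not code from our loader. It assumes a 48 kHz sample rate, a hypothetical 48-sample block size and the Daisy Seed's 480 MHz Cortex-M7 clock, and the function names are made up for illustration.

```cpp
#include <cassert>
#include <cstdint>

// Back-of-the-envelope real-time budget helpers (hypothetical names).

// Wall-clock time available to process one block, in microseconds.
inline double block_deadline_us(double sample_rate_hz, int block_size) {
    assert(sample_rate_hz > 0.0 && block_size > 0);
    return 1e6 * block_size / sample_rate_hz;
}

// CPU cycles available per block at a given core clock frequency.
inline std::uint64_t cycles_per_block(double cpu_hz, double sample_rate_hz, int block_size) {
    assert(cpu_hz > 0.0 && sample_rate_hz > 0.0 && block_size > 0);
    return static_cast<std::uint64_t>(cpu_hz * block_size / sample_rate_hz);
}

// Real-time factor: compute time divided by the duration of audio processed.
// Anything above 1.0 cannot keep up with a live input.
inline double real_time_factor(double compute_s, double audio_s) {
    assert(audio_s > 0.0);
    return compute_s / audio_s;
}
```

Under those assumptions, each 1 ms block gets about 480,000 CPU cycles for everything, and a real-time factor of 2.5 means the unoptimized model needed two and a half times that budget.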

The problems broke down into three areas:

- Model size: neural networks carry a memory footprint that has to fit within embedded constraints.

- Compute efficiency: the linear algebra library the codebase relies on wasn't optimized for the small matrix sizes NAM actually uses.

- Model loading: parsing the standard .nam JSON format on a device with no OS and very limited RAM is harder than it sounds.

What we did about it

We started by profiling the code to understand exactly where time was being spent, rather than guessing. The main bottleneck turned out to be Eigen — specifically, how it handles matrix multiplications for small, fixed-size matrices, which is exactly what NAM inference uses. We added specialized routines tuned to the matrix sizes that actually appear in NAM models, alongside several other targeted improvements.
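The sketch below illustrates the general idea of specializing on matrix size; it is not our actual routine in NeuralAmpModelerCore. When the dimensions are template parameters, the compiler knows every loop trip count and can fully unroll and vectorize the loops instead of going through a general-purpose kernel. The function name `matmul_fixed` and the row-major layout are assumptions for illustration.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical fixed-size matrix multiply: C (M x N) = A (M x K) * B (K x N).
// All matrices are dense, row-major float arrays. Because M, K and N are
// compile-time constants, the compiler can unroll these loops for the small
// sizes that appear in a given model.
template <std::size_t M, std::size_t K, std::size_t N>
void matmul_fixed(const float* A, const float* B, float* C) {
    static_assert(M > 0 && K > 0 && N > 0, "dimensions must be positive");
    assert(A != nullptr && B != nullptr && C != nullptr);
    for (std::size_t i = 0; i < M; ++i) {
        for (std::size_t j = 0; j < N; ++j) {
            float acc = 0.0f;
            for (std::size_t k = 0; k < K; ++k) {
                acc += A[i * K + k] * B[k * N + j];
            }
            C[i * N + j] = acc;
        }
    }
}
```

Which kernel wins on a Cortex-M7 is an empirical question, which is why profiling on the target came before any rewriting.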

For the loading problem, we developed a new compact binary model format (which we called “.namb”) designed as a drop-in alternative to .nam for use on embedded devices. The idea is that a companion app, running on your phone or desktop, converts .nam files from the TONE3000 library into the compact format, then transfers them to the device over Bluetooth or USB. The model itself isn't retrained or altered, so there's no quality loss, just a leaner representation that works within the device's constraints.
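To show why a binary format is so much easier to load than JSON, here is a toy layout with a matching writer and reader. This is NOT the actual .namb specification (that lives in the nam-binary-loader tools); the "TOYB" magic, the field order and the `toy` namespace are invented for illustration. It also assumes 32-bit floats and little-endian byte order on both the converter and the device.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Toy binary model layout (hypothetical, NOT the real .namb spec):
//   bytes 0-3   magic "TOYB"
//   bytes 4-7   uint32 format version
//   bytes 8-11  uint32 number of weights
//   bytes 12-   float32 weights
// Values are copied in host byte order, assumed little-endian everywhere.
static_assert(sizeof(float) == 4, "layout assumes 32-bit floats");

namespace toy {

constexpr char kMagic[4] = {'T', 'O', 'Y', 'B'};
constexpr std::uint32_t kVersion = 1;

// Desktop/phone side: pack weights into a byte buffer.
std::vector<std::uint8_t> serialize(const std::vector<float>& weights) {
    const std::uint32_t count = static_cast<std::uint32_t>(weights.size());
    std::vector<std::uint8_t> out(12 + 4 * weights.size());
    std::memcpy(out.data(), kMagic, 4);
    std::memcpy(out.data() + 4, &kVersion, 4);
    std::memcpy(out.data() + 8, &count, 4);
    if (!weights.empty()) std::memcpy(out.data() + 12, weights.data(), 4 * weights.size());
    return out;
}

// Device side: validate the header and copy weights into a caller-provided
// array, with no allocation and no text parsing.
// Returns the number of weights written, or -1 if the buffer is invalid.
long parse(const std::uint8_t* buf, std::size_t len, float* dst, std::size_t dst_capacity) {
    if (buf == nullptr || len < 12 || std::memcmp(buf, kMagic, 4) != 0) return -1;
    std::uint32_t version = 0, count = 0;
    std::memcpy(&version, buf + 4, 4);
    std::memcpy(&count, buf + 8, 4);
    if (version != kVersion) return -1;
    const std::size_t n = static_cast<std::size_t>(count);
    if (n > dst_capacity || len < 12 + 4 * n) return -1;
    if (n > 0) std::memcpy(dst, buf + 12, 4 * n);
    return static_cast<long>(n);
}

}  // namespace toy
```

On the device, loading reduces to a few bounds checks and one copy, which is the property that matters when there is no OS and only a small amount of RAM.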

For this initial exploration, we used a smaller model variant (A1-Nano) with a ReLU activation function in place of tanh. This is a well-established swap: ReLU is a single comparison per value, while tanh requires evaluating (or approximating) a transcendental function, so the change cuts compute cost significantly. (If you want the full technical breakdown of all of this, including what we tried that didn't work, check out our engineering post.)
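The cost difference is easy to see side by side. The two functions below are a minimal illustration with hypothetical names, not NAM's activation code: ReLU is a compare and select per element, while tanh calls `std::tanh`, and even fast tanh approximations typically need several multiplies and a division.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// In-place ReLU: one compare/select per element.
inline void relu_inplace(float* x, std::size_t n) {
    assert(x != nullptr || n == 0);
    for (std::size_t i = 0; i < n; ++i) x[i] = x[i] > 0.0f ? x[i] : 0.0f;
}

// In-place tanh: one transcendental evaluation per element,
// considerably more work on a microcontroller than a compare.
inline void tanh_inplace(float* x, std::size_t n) {
    assert(x != nullptr || n == 0);
    for (std::size_t i = 0; i < n; ++i) x[i] = std::tanh(x[i]);
}
```

The activation runs on every channel of every layer for every sample, so even a modest per-element saving adds up across a whole model.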

Results

After optimization, the same model that originally took over 5 seconds to process 2 seconds of audio now runs in approximately 1.5 seconds. That is faster than real time, with roughly a quarter of the compute budget left over for effects processing before and after the NAM block.
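The headroom figure follows from simple arithmetic: 1.5 s of compute for 2 s of audio is a real-time factor of 0.75, leaving about 25% of the budget. The helpers below, with hypothetical names, turn that into cycles, assuming the Daisy Seed's 480 MHz clock and a hypothetical 1 ms block (48 samples at 48 kHz), i.e. 480,000 cycles per block.

```cpp
#include <cassert>

// Fraction of the real-time budget left after the model runs, given the
// measured compute time for a stretch of audio. Negative means too slow.
inline double headroom_fraction(double compute_s, double audio_s) {
    assert(audio_s > 0.0);
    return 1.0 - compute_s / audio_s;
}

// Cycles left per block for other effects, assuming the average ratio holds
// for every block (a simplification: worst-case block timing is what counts).
inline double spare_cycles_per_block(double headroom, double cycles_per_block) {
    assert(cycles_per_block >= 0.0);
    return headroom * cycles_per_block;
}
```

With these numbers, roughly 120,000 cycles per block remain for processing before and after the NAM block. Since these are averaged measurements, a real product would still need to check the slowest individual callback.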

More importantly, this work gave us a concrete picture of the embedded NAM problem that feeds directly into the design of Architecture 2 (A2). Slimmable NAM, our approach to letting a single model adapt its compute requirements to the hardware it's running on, is a direct result of what we learned from our partners and observed in this experiment.
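This post doesn't describe how Slimmable NAM is implemented. For readers unfamiliar with the general idea, the sketch below shows the generic "slimmable network" trick from the research literature: store one set of weights for the full width, then run only the first `active` channels on weaker hardware. The `SlimmableDense` type is hypothetical and is only meant to illustrate how compute can scale with the chosen width.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Generic width-slimmable dense layer (illustration only, not Slimmable NAM).
// Weights are stored for the full width; at run time only the first `active`
// input and output channels are used, so the multiply count is
// active * active instead of width * width.
struct SlimmableDense {
    std::size_t width;
    std::vector<float> weights;  // width x width, row-major (out x in)
    std::vector<float> bias;     // width entries

    void forward(const float* in, float* out, std::size_t active) const {
        assert(active <= width);
        assert(weights.size() == width * width && bias.size() == width);
        for (std::size_t o = 0; o < active; ++o) {
            float acc = bias[o];
            for (std::size_t i = 0; i < active; ++i) acc += weights[o * width + i] * in[i];
            out[o] = acc;
        }
    }
};
```

The hard part, which this sketch ignores entirely, is training the shared weights so that every supported width still sounds right.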

If you are interested in more details on these experiments, here is how we are sharing everything we developed for them:

- Merging the numerical optimizations directly into NeuralAmpModelerCore

- Publishing the nam-binary-loader tools and library

- Publishing the example Daisy code as a blueprint for the development of other NAM pedals at nam-pedal

- Publishing a detailed discussion of the microbenchmarks (what worked and what didn't) at João's blog